I don’t hate AI. That’s pointless. I hate the people who use AI to ruin everything, which is the majority of AI users today.

I think you’re being too literal, they mean they hate having to use it or they hate being constantly exposed to its shitty output. Obviously pretty much nobody hates, like, Markov chains.

Yeah, it’s the latter for me. Windows adding it to Notepad was the final straw.

I also hate the term AI.

And I’m not sure about the actual code either. I had an idea for a SciFi story I wanted to write where a person’s consciousness is uploaded into a computer. Now I can’t even try it without feeling gross because LLMs ruined everything for me, even AI scifi.

In fairness, uploading consciousness into a computer is a pretty old sci-fi trope so it would have been derivative even before the AI bubble. That being said, tropes are tropes for a reason, don’t let shitty real life “AI” stop you from writing that story. Just don’t use the term artificial intelligence and you’re good.

Reminds me of one video game,

…which is a medium spoiler for the game itself

Pretty much, but that one took it to whole new levels.

That’s Tron inspired, not AI. Boom, you are around it.

I reserve the hate (well, severe disdain and contempt, hate is personal in my book, haven’t needed it for quite a while) for the C-Suites and owners, users get contempt if they’re using it to think for them and a pass with some sympathy if they’ve found a way to use it as a tool while retaining executive function. LLMs and broader machine learning are fine, just a tool. You can use a wrench constructively or give someone a concussion, that’s on you.

SamA is the exception, hate that market cornering fucker (and yes it’s personal, I was going to go AM5 this year).

Man, I loved playing around with GPT2, I was hyped about GPT3, I paid for AI Dungeon and NovelAI, I was so stoked about all of this. When ChatGPT came out, I was like “y’all are just learning about it?” but I was so happy that this tech I loved was getting recognition.

I’m definitely in the “AI hate” camp now, this really is the worst timeline

I’ve worked with AI, off and on, for over 20 years now. The thing is, I would argue that it’s always been the same. The point of AI has always been to take the place of humans. It has always been a scary line of research with profound implications for the future.

The biggest difference now, I would argue is the accessibility. It used to be that only academics and experts could use it, but now that it uses a natural language interface, any dumb assholes and any bad actors can easily use it. And they do. In droves.

I hate AI. I don’t hate LLMs, SLMs, generative models, etc… but the marketing campaign buzzword that’s currently making hardware unattainable by average consumers and accelerating tracking, canvassing and profiling?

Absolutely hate it - with a vehement passion.

The fuck are all these comments? AI is shit, fuck AI. It fuels billionaires, destroys the environment, kills critical thinking, confidently tells you to off yourself, praises Hitler, advocates for glue as a pizza topping. This tech is a war on artists and free thought and needs to be destroyed. Stop normalizing, stop using it.

Separate LLMs and AI.

LLMs are shit, fuck LLMs. They fuel billionaires, destroy the environment, kill critical thinking, confidently tell you to off yourself, praise Hitler, advocate for glue as a pizza topping. This tech is a war on artists and free thought and needs to be destroyed. Stop normalizing, stop using it.

And AI is a pipe dream no one is close to fulfilling, won’t be realized by feeding LLMs all of the data in existence, and billionaires are destroying our economy in their pursuit of it.

You are referring to AGI not AI.

The broad category of AI is most definitely real.

Could you define that category? Or give us an example of a programme that fits under it and one that doesn’t?

AI contains LLMs and Machine learning and AGI.

My main point is that you shouldn’t throw out computerised protein folding and cancer detection with your hatred of LLMs.

OK, and my point is that people are using the term “AI” so loosely as to be indistinguishable from “algorithm”.

We’ll still have the statistical protein folding models after this bubble eventually pops, we’re just not gonna call it “AI”. It’s a trendy marketing department word, and its usefulness as a description in Computer Science is rapidly diminishing.

I would say it just got widespread use. I definitely heard of MS Word doing autofill as ‘AI’ at the time when deep learning was a freshly invented thing. People tried to label a lot of things ‘AI’; with LLMs the label just stuck better.

overheard, rumored, etc: advances in ai are then quickly popularized, to the point where they’re no longer thought of as ai. then people look at the main ai field, and think “why haven’t they done any ai work?”

AI to a layman just means “LLMs and Generative AI that rich assholes keep trying to force me to use or consume the output of”. i dont think its worthwhile to split semantic hairs over this. call the “good” stuff CNNs or machine learning if you really feel the need to draw a distinction.

To a layman, yes I agree.

Not many laymen on lemmy. We can afford to be precise with our language.

Stuff like ML, Computer Vision, Alpha Fold?

example of some that fit under it: imagenet classifiers (they’re people too!), uhmm

example of some that don’t: chatgpt

Doesn’t work that way unfortunately. Ask a person on the street what AI is and they’ll tell you whatever flavor slop generator they’re familiar with. You’re not going to see much pushback on ML around the Fediverse.

On the fediverse I think we can be more precise in our language.

Change this out for any other technology that’s been innovated throughout human history. The printing press, semiconductors, the internet.

The anti-ai rhetoric on this platform is becoming nonsensical.

At this point it’s just bandwagon hate. These people don’t even understand the difference between llms and AIs and the various applications that they have.

Any other technology? How about 3D TVs, smart glasses, blockchain, NFTs, the Metaverse?

Yes. These all qualify. They’re all massively successful technologies.

Well, aside from 3D TVs and smart glasses. But they’re generally innocuous. Yes I also understand that smart glasses have privacy issues but then again in this day and age what doesn’t.

If you think any of these are massively successful, I question what reality you are living in.

The blockchain, NFTs, the metaverse aren’t successful?

These three things generate massive amounts of revenue. The metaverse especially is a billion dollar IP.

The word success doesn’t have a positive connotation to it in this case.

The metaverse had a billion dollars pumped into it, and yet for all the money they spent they have literally no users to show for it. Likewise for NFTs, a few idiots got suckered into paying for monkey JPGs and are now left holding a bag that no one wants.

The blockchain has a small cult trading money back and forth to make it look bigger than it really is. But it’s never achieved any kind of mainstream adoption as the currency true believers keep insisting it will be. And it never will, because it’s way too inefficient to ever scale.

Blockchains in an age of Trump choosing a new Fed chair after trying to have Powell arrested.

Trust your government over software and cryptography, which has no basis in reality outside of the laws of physics and mathematics.

Figured I’d summon at least one person trying to defend crypto. Just because the US has issues doesn’t suddenly mean crypto is good.

Bitcoin has been around for almost two decades now, and still has not achieved anything beyond being a means for speculators to try and fleece each other. If it hasn’t reached widespread mainstream adoption by now, it never will.

Crypto is a failed technology, full stop.

Gold is also just digging something up and then re-burying it. If it hasn’t replaced fiat then why are people buying it, why has it been going up 100% a year recently when there’s no new industrial demand for it?

It’s fine to not hold it, but all finite assets have some intrinsic value, because fiat keeps pumping via new debt issuance, which is inevitably debased. Like it was during Covid, or 2008, or 2001, etc…

Crypto has a higher volatility, but can have a higher return, and is more closely correlated to the Nasdaq; like all assets it’s generally efficiently priced. I’d say it’s closer to TQQQ than it is to VT or gold, which may be suitable for 1-10% of a portfolio depending on goals and risk tolerance. If they drop interest rates quickly to pump the stock market, TQQQ and Bitcoin would likely both rise dramatically.

I feel like you just autopiloted into random cryptobro talking points that have nothing to do with the conversation. I don’t care if you like crypto, the reality is that rest of the world has already rejected it and moved on.

If the US dollar goes through hyperinflation and becomes worthless, people in the US won’t switch to Bitcoin or other crypto as their main form of currency. We’ll do exactly what citizens of every country that experiences such a currency crash does - start using other more stable currencies. You would see businesses start accepting a mix of Canadian dollar, Mexican pesos, Euros, and Yuan.

I’ve contemplated this myself, about competing currencies, and how that would leave the world if we had cheap and ubiquitous FX with little to no drag. Would it not cause a race to the bottom for inflation targeting, and lead to something similar to everyone using a fixed currency?

Why would I hold Canadian dollar or Pesos if their inflation target is 2% versus say the Swiss 1%? Is there enough new money supply for everyone to even attain the lowest inflation currency, or do they bid down the denomination as that country’s FX value rises?

Why would I hold Canadian dollar or Pesos if their inflation target is 2% versus say the Swiss 1%?

No. No it wouldn’t. Because ultimately you (assuming you’re in the US) have to pay your taxes in USD. People say that fiat currencies aren’t backed by anything, but that isn’t true. They’re backed by the fact that every single US citizen and resident has to gather up thousands of dollars every year and pay them to the government. Even if you could convince your employer to pay you in Euros, the IRS will still demand you pay whatever taxes you would owe if you were paid in an equivalent amount of dollars.

software and cryptography, which has no basis in reality outside of the laws of physics and mathematics.

i don’t know if you’re joking or not, but yes you are

Sorry don’t remember any of those other technologies using so much resources, raising prices for everyone else as they don’t pay the actual cost. And being wrong about stuff.

Bitcoin and Ethereum PoW used resources and raised (GPU and electricity) prices for everyone.

They literally killed and excommunicated people after the invention of the printing press for producing unauthorized copies of the Bible. Figures like William Tyndale paid with their lives for translating scripture into English, challenging the Church’s authority.

There is illicit material circulating freely on Tor, demonstrating that technology can distribute both knowledge and criminal content.

Semiconductors underpin some of humanity’s most powerful and destructive technologies, from advanced military systems to cyberweapons. They are a neutral tool, but their applications have reshaped warfare and global power dynamics.

You are fully entitled to dislike AI or technologies associated with it. But to dismiss it entirely is ignorant. Whether you want to believe it or not, we are on the precipice of a technological revolution, the shape of which remains uncertain, but its impact will be undeniable.

Bullshit, fuck your false equivalency. This tech is good at generating slop, propaganda, and destroying critical thinking. That’s it. It has zero value.

Ok. This is clearly rage bait.

You’re an ignorant fool and I’m probably not the first person to tell you that.

Fuck off and go enjoy your slop, bot

You know what, fuck you and your bullshit holier than thou attitude.

You’re a stupid piece of shit that will never amount to anything worth while other than being a sweat lord mod on your own Lemmy sub literally called “fuck ai”.

Literally a sex bot programed by a Russian propaganda mill has more original thought than you.

Seriously dude. You’re a cunt.

mmm, not just “propaganda mill”, but “Russian propaganda mill”?

oh, and you just looked at their profile after being demolished (with no prejudice)?

i mean, if by “this tech” you mean machine learning in general, then no, it has been used for good purposes(?), but if you mean this tech then absolutely

wdym “change this out”? with what??

The internet, the printing press, firearms, semiconductors, NFTs, the blockchain.

A multitude of other technologies.

oh man i meant “change what”. change what part to that?? there’s ~two parts and i don’t know what you’re talking about

So what is AI in your opinion because LLMs fall under that umbrella.

My opinion. AI is a way to improve a computer model’s accuracy over time based on new data.

I could even argue that ChatGPT etc. are not AI because the LLMs are not directly learning from the inputs they are receiving.

yes they are!! do you know what the “T” means?? trained (edit: [loudly incorrect buzzer])!! over time!! from data!!

if you really want to be pedantic, chatgpt is ai!! there’s rlhf, yaknow??

r/confidentlyincorrect

The G means Generative

The P means Pre-trained.

The T means Transformer.

It is not learning directly from its users, although the planned stateful Amazon infrastructure will likely change this.

well darn

well you know what they (me i use they/them pronouns) say is that being confidently wrong is essential for intelligence

Cunningham’s Law states “the best way to get the right answer on the internet is not to ask a question; it’s to post the wrong answer.”

Which ai and for which use? It’s a tool. It’s like getting mad cause a guy invented a hammer. It’s not the tool hurting you dude, it’s the people wielding it.

If that hammer also had massive environmental impacts and hammers were pushed into every aspect of your life while also stealing massive amounts of copyrighted data, sure. It’s very useful for problems that can be easily verified, but the only reason it’s good at those is from the massive amount of stolen data.

Arguably, hammers also have a massive impact on the environment. They are also part of everyday life. Building you live in? Built using a hammer. New sidewalk? Old one came out with an automatic hammer. Car? Bet there was a type of hammer used during assembly. You can’t escape the hammer. Stop running. Accept your inner hammer. Embrace the hammer, become the hammer. Hammer on.

All those things you said are vague and nebulous and every day people are not gonna understand that message and will just think you’re hysterical or a conspiracy guy. The way the message is put forwards is super important

If an everyday person asked me why I don’t like ai I would show them those reasons in more depth, but on lemmy most people have seen the articles about ai water use and the light/noise/water pollution of data centers.

Is the hammer making nude images of children?

A camera can, ban cameras

Yup. you can use a chisel or even just a hammer, you just need the right person with it

Same with the internet. Fuels billionaires, destroys the environment with data centers and cables, kills libraries and textbook research, spreads nazi propaganda. We need to stop using technology in general.

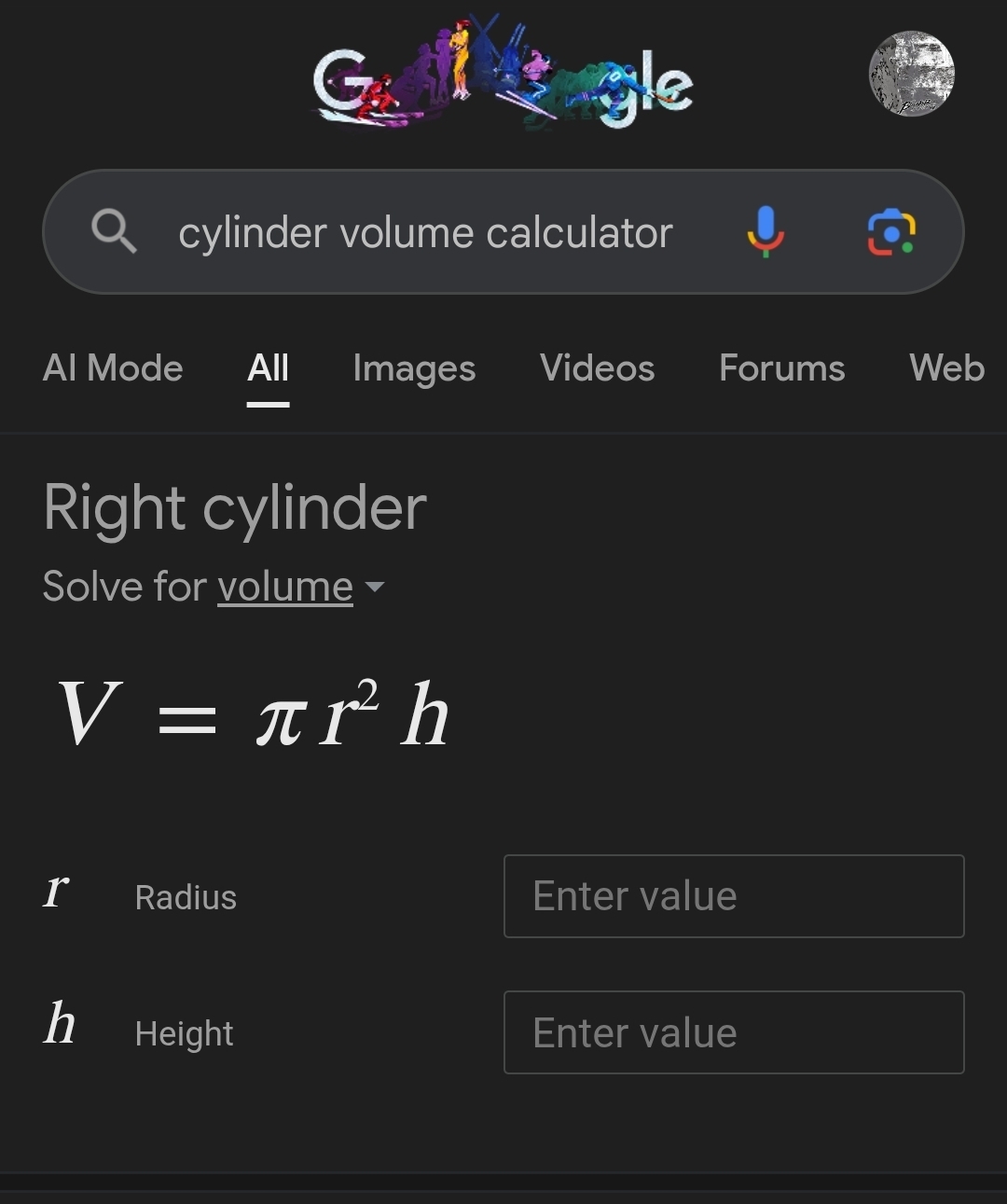

Lets see a standard problem I’m randomly making up using a free AI, you tell me if this kind of thing can be useful to someone:

If I have a bucket that is 1 meter tall and 1 meter wide how much volume can it hold?

The volume V of a cylinder can be calculated using the formula:

$$V = \pi r^2 h$$

Where:

r is the radius, h is the height.

In this case, the bucket is 1 meter tall and 1 meter wide, which means the diameter is 1 meter. Therefore, the radius r is:

$$r = \frac{1}{2}\,\text{meter} = 0.5\,\text{meters}$$

Now substituting the values into the volume formula:

$$V = \pi (0.5\,\text{m})^2 (1\,\text{m})$$

$$V = \pi (0.25\,\text{m}^2)(1\,\text{m})$$

$$V \approx 0.7854\,\text{m}^3$$

Thus, the volume the bucket can hold is approximately 0.785 cubic meters.
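For anyone who wants to check the arithmetic without trusting the chatbot, here is a minimal Python sketch of the same calculation. It assumes the bucket is a perfect right circular cylinder, as the answer above does; the function name `cylinder_volume` and its parameters are made up for illustration.

```python
import math


def cylinder_volume(diameter_m: float, height_m: float) -> float:
    """Volume in cubic meters of a right circular cylinder (idealized bucket)."""
    radius_m = diameter_m / 2  # "1 meter wide" is read as the diameter
    return math.pi * radius_m ** 2 * height_m


# The bucket from the question: 1 meter wide, 1 meter tall.
volume = cylinder_volume(diameter_m=1.0, height_m=1.0)
print(f"{volume:.4f} m^3")  # prints "0.7854 m^3"
```

This agrees with the roughly 0.785 cubic meters quoted above, which is about 785 liters.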

Using llms for math questions is probably the worst usage for llms.

And all of this is easily calculated without ai. You can literally google it and let google do the math for you without ai.

Perhaps you’re right, though the AI also allows natural language or voice, and further explanations.

When you visualize a cylinder, think of stacking many thin circular disks (each with a height $\Delta h$) to build up the height $h$. The volume of each individual disk is its area $\pi r^2$ multiplied by its infinitesimally small height $\Delta h$. When you aggregate these over the full height $h$, you arrive at the volume of the cylinder.
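Written out, the disk-stacking picture is just an integral over the height. This is a sketch that assumes the radius $r$ stays constant along the axis; $z$ is a symbol introduced here for the position along the height, not something from the quoted answer.

$$V = \int_0^h \pi r^2 \, dz = \pi r^2 \int_0^h dz = \pi r^2 h$$

With $r = 0.5\,\text{m}$ and $h = 1\,\text{m}$ this gives $V = 0.25\pi\,\text{m}^3 \approx 0.785\,\text{m}^3$, matching the number above.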

It’s also eroding all the bullshit we used to do, like cover letters and things that had no reason to exist besides wasting someone’s time. So truth be told I’m a fan, even if it is a massively unprofitable bubble. I also recognize its limitations given its hallucinations, so I understand it shouldn’t be relied upon for useful work.

I won’t argue about the value of explanation from a ~~lying~~ hallucinating machine.

But I like how your use case is “it does the things that I believe to be useless and time wasting for everyone involved. But instead of pushing for the end of these time wasting acts, I waste a little less time with llms (instead of all of the time by not doing these time wasting acts) while still wasting the time of the reader.” What an efficient use case! We should violate IP law, waste drinking water and energy for it!

The problem is many people liked how it was, it makes more work to do, makes it seem official. I believe in that book bullshit jobs, and think most people are winging it with performative bullshit.

What I saw recently at my work is people received something that looked like AI slop from the head boss and they laughed about it, which got back to the boss, who then defended himself that it wasn’t AI.

So I’m hopeful that people are called out for wasting peoples time, and that long winded blobs of meaningless text become a firable offense.

“IP law”? IP is not a good term. it suggests that ideas are property??

you see companies mining everything possible, such that dirt just goes <poof>! and your first thought is “oh no, the landowners had the right to those minerals! they should’ve bought a permit first!”(?)

That’s well put, I’m under no naive assumption that LLMs are AI. Though I do think you’re discounting the usefulness, as it did give the right answer, which is a fine use for average people doing basic math or whatever project they’re working on. I’m under no delusion that it’s replacing workers, unless someone’s job is writing fancy emails or building spreadsheets, and I do still think it’s a massive bubble.

Found the Mennonite.

The fuck are all these comments? The internet is shit, fuck the internet. It fuels billionaires, destroys the environment, kills critical thinking, confidently tells you to off yourself, praises Hitler, advocates for glue as a pizza topping. This tech is a war on artists and free thought and needs to be destroyed. Stop normalizing it. Stop using it.

It’s the same as any other commercial tool. As long as it’s profitable the owner will continue to sell it, and users who are willing to pay will buy it. You use commercial tools every day that are harmful to the environment. Do you drive? Heat your home? Buy meat, dairy or animal products?

I honestly don’t know where this hatred for AI comes from; it feels like a trend that people jump onto because they want to be included in something.

AI is used in radiology to identify birth defects, cancer signs and heart problems. You’re acting like its only use-case is artwork, which isn’t true. You’re welcome to your opinion but you’re welcome to consider other perspectives as well. Ciao!

The use in radiology is not a good thing. Hospitals are cutting trained technicians and making the few they keep double check more images per day as a backup for AI. If they were just using it as an aid and the humans were still analyzing the same number of pictures that would be fine, but capitalism sees a way to save a buck and people will die as a result.

This isn’t a problem with AI though, it’s a problem with the people cutting trained technicians. In places where such incompetent people don’t decide that, you get the same number of trained technicians accepting (and being a part of) a change that gives them slightly more accurate findings, resulting in lives being saved overall. Which is typically what health workers want to begin with.

That big ol list of things didn’t do it for you, huh?

That sensationalized list? No, not really.

I honestly don’t know where this hatred for AI comes from

Did you try reading the comment you just replied to?

It’s in part because people aren’t open to contradictions in their world view. In part you can’t blame them for that since everyone has their own valid perspective. But staying willingly ignorant of positives and gray areas is a valid criticism. And sadly there are plenty of influencers peddling a black-white mindset on AI, ignoring all other uses. Not saying intentionally or not, again perspective. I’m sure online content creation has to contend with a lot more AI content compared to the norm. But only on the internet do I encounter rabidly anti AI people, in real life basically nobody cares. Some use it, some don’t, most do so responsibly as a tool. And I work in the creative industry…

“I’ve never seen it it must not exist”

I work in a creative industry too and it is the bane of not only my group but every other company I’ve spoken to. Every artist and musician I know hates it too.

I never said it doesn’t exist. I’m sorry people in your area are being negatively affected if so. But the point still stands. My experience is just as valid.

“in real life basically nobody cares”

“My experience is just as valid”

really?

I’m pretty anti AI as it is a tool of the billionaire class to enslave the masses. Look up TESCREAL, it’s the digital eugenics billionaires and fringe philosophers believe in and it is the driving force in the AI push.

That being said I can see a use for a focused, local LLM/AI assistant. I have to search a lot of confidential technical manuals, schematics and trust cases in my job. We are thinking about testing out Ollama and loading all our documents into it to make searching them easier.

You are the exact person I didn’t mean 😄 the first is a very valid reason to dislike AI.

Even before our current time, “nobody cares” is not a thermostat reading of what “really matters”. It almost sounds like you believe people know what’s best for themselves, when the truth of the matter is that humanity has long proved otherwise.

You sound like a cartoon supervillain, Lex Luthor ranting to superman about the common animals not knowing what’s best for themselves.

Republicans.

Have you seen American society lately?

I don’t believe that. What I’m saying is that all the people I work with look very critically and skeptically at the world, as that’s pretty much an inherent requirement for the creative industry. We all know what AI is and what it does, and most arguments against it hold no water with people who have a realistic view of the industry, to the point that it simply cannot be black and white like some claim it to be.

There are a few good reasons to dislike AI, but those don’t apply to all of AI. Some are value based, and other people have other values that are equally valid. And some can be avoided entirely. Like how you could ship packages with a coal rocket instead of a train on electricity, or just shipping less packages to begin with.

There is trust and experience between one another in the industry that we aren’t using it unnecessarily, wastefully, and incorrectly, and AI is not anywhere near a requirement by consumers nor healthy minded businesses.

Look up dot com bubble. We still have the internet. Just because AI is over-hyped and in a bubble doesn’t mean it won’t still have uses.

I fully agree. I still remember the time when using Photoshop was seen by some as not being a “real artist”, because “any idiot with a mouse can draw now”. I’m not under any illusion this will last forever, the negative sentiment is boiling because of the bubble and its negative externalities, not because of the technology itself. So once that bursts, things will hopefully be a lot more peaceful.

machine learning can be useful in limited cases, but is not to be trusted. agentic ai has to go. computers are not creatures, and thinking otherwise is a bad mistake.

k

OK so why is AI so big right now? Because it isn’t profitable. Even their most expensive tier is losing them money. Then you have the data centers getting breaks on electricity, so costs go up for the rest of us to make up the difference. Where is this magical profitability that is driving AI?

So do computers.

How does AI fuel billionaires?

who owns the datacenters?

Why is that relevant? AI is a massive money loser.

they have some kind of plan, or maybe it’s all a sunk cost scenario. Either way, they think they can get some benefit from it, and they are so determined that they are throwing insane amounts of money into it even though there is no clear way to get any profit from it. So either they know something we don’t or they are desperate to save their investments. The worse ai does, the better it is for all of us: once ai crashes, the components stop being wasted on it, less electricity and fewer materials are wasted on datacenters, and best of all, all those fucking billionaires lose a lot of the money they have invested, or at least the investors who thought it a good idea to support them lose and maybe don’t do it again.

Just because they have a plan doesn’t mean it’s a good one or that it will work.

AI doesn’t fuel billionaires, it drains their money.

yes, but i dont think billionaires are THAT dumb. They see some value in it for them that they deem worth the risk of losing all that money. So that is why its even more important that the ai crap fails and continues to drain their money.

Or maybe i’m underestimating just how much money they have and maybe even all this is just akin to losing a large portion but it doesnt matter because they can just exploit everything else… But, if they get what they want then its bad for all of us no matter what.

It baits investors into giving them money, mainly.

Everyone’s losing money on the deal, it’s not like the billionaires are cleverly making money on AI while everyone else is losing money.

I find AI to be more reliable every day. Fail to see an issue of killing critical thinking. Also, in my experience, search engines are flooded with advertisements and garbage unrelated to my search. Can only hope the business world does not “shittify” AI in the same way.

70 years ago, it was predicted pay-television would replace advertisements. Instead television evolved to a fee based system and a higher ratio of ads. So you can bet a good thing will evolve in the same way.

Some people I work with when they do an internet search they go straight to the ai info. Literally read out the first bit of what’s there and take that as the truth.

Great way to make yourself immediately untrustworthy

I constantly catch people that supposedly check sources after using chatbots not doing it.

Do I love my 4-year-old? Yes

Would I let my precocious 4-year-old full of imagination write my business report? Fuck no. Are you stupid or what?

McKinsey isn’t exactly stupid, it’s amorality run amok and a culture of cutthroats.

If you’ve ever worked with consultants or managers in general, like 50-75% of them are fucking stupid. Just because they can convince other idiots that they’re not, doesn’t mean they aren’t. I’ve watched the blind lead the blind into financial ruin, while getting paid big bucks to do it.

I don’t believe the people who contract them are being duped though. They do it to delegate and dilute the chain of responsibility until their decisions become acceptable.

I think it’s a bit of both. Sometimes the people hiring them are truly clueless. The kinds of reports that management consultants make seem really well thought out and intelligent. Other times, upper management wants to make a big decision, and they think it’s the right one, but they need something to show they considered all the alternatives and that an outside source agrees with them.

Also, management consultants are very stupid, but they’re clever in a very narrow area. That’s why they succeed with upper management, because like LLMs, upper managers think they’re clever.

As a former consultant and manager, I wholeheartedly agree, but your % is too low. The culture of consulting is poison and makes monsters out of people.

Here’s a great video about what they did to disneyland https://youtu.be/Q7pgDmR-pWg

And then everyone clapped.

Tears in their eyes

The name of those tears? Albert Einstein.

And then everyone applauded.

People around me use AI all the time to get answers to generalized topics. More and more they use it like a search engine / information augmentation system.

They are not technical people. They mostly know that the information needs to be double checked and might be wrong. But usually take it at face value if the importance is low.

Honestly this is about what they did before. They would search Google, click on the first blog, skim it, and repeat until getting some answer they believe.

I too use AI regularly for brainstorming, quickly summarizing massive text messages, and reformatting text from a jumbled mess into something more cohesive, etc.

I don’t love it or hate it. In some cases it saves a lot of time and is useful tool. In other cases it outputs trash that we cannot use for any serious case.

Just like a hammer or a shovel, it’s a tool. Can be used the right way and it can be used the wrong way.

I think of an LLM as extraordinarily lossy compression. All the training data is essentially encoded in the model. You can get an approximation of the data back out again with the right input.

I don’t think it’s any less reliable than random blogs on the web, and I don’t have to wade through SEO tripe either.

The annoying thing though is that all the random blogs on the web are written using these LLMs now. It makes it much harder to be critical of your sources, because they’re all coming from an unnamed, proprietary LLM with no information about who owns it or the training data. At least before, I could look up the user or check out their other articles; now every article is randomly generated from some unknown prompt.

I would argue this isn’t only a bad thing though. Even before AI, many bogus articles and information existed. Eg. that people swallow spiders in their sleep, which many outlets parroted.

I would guess most people never checked (m)any sources on most information they found so long as the ‘vibe’ felt trustworthy. There is no cure to make reality simple, and the more pressure we have to teach people to think critically, the better.

- AI is much better at creating internet spam.

- AI is a vector for even reputable places to “set and forget” any article they’re in charge of. Any mistruths are simply ‘glitches’.

- The pressure on people to think critically only matters if people actually start thinking critically. Kids use this technology to skip their homework.

No disagreement here. I’m simply saying because you are more likely to be misled now than ever, being lazy about it isn’t an option anymore, and teachers can use that fact to drive the point home stronger. In the past if you were lazy about checking sources and verifying information, chances were much higher you still got somewhat valid information that didn’t harm your life down the road. Now you might just hurt yourself by putting glue on your pizza. Not saying I desire that, but the consequences of intellectual laziness have never been bigger, so the emphasis on teaching understanding must match that, since the alternative is being taken advantage of.

#3 is very important, as this is the core thing a school should teach. But let’s not kid ourselves that kids weren’t cheating their way out of homework since the start of time 😄

But let’s not kid ourselves that kids weren’t cheating their way out of homework since the start of time 😄

I don’t mean to come off as too aggressive because I don’t think we’re really arguing with each other. But, I tend to see statements like this as a kind of handwaving apologia for something that, to be clear, real people are doing to us on purpose. The same way that people might lament the coming of a hurricane season; nothing really to be done about it.

It can certainly be used for that, I will admit. But no, that isn’t my intention. I hear many good stories on that front of teachers who have gotten a really good nose for AI and are using it as learning moments for their students. The world is filled with ways to cheat, and teachers are well aware of that. In the end, the process of getting students to unlearn cheating with AI is the same as for cheating in conventional ways, is all I’m saying.

That’s what makes them shitty though.

When I have a hard technical problem I often search for and read through a dozen different sources. Many of them are wrong, or are right but not covering exactly the situation I’m looking at. Eventually I’ll find one that’s either right and answers my problem, or gives me the clue I need so I can figure out the solution for myself.

If I ask an LLM to solve the problem, it will make up an answer that would seamlessly blend in with all its training data. In other words, it’s most likely to produce something that’s wrong, or something that’s right but not for my particular case, or something that’s close but incomplete. That’s effectively useless. At worst it blends in with its training data enough to convince me it’s right, while not actually being right. At best it’s something that is close enough to give me the clue I need. Most of the time it’s going to be something that’s wrong and I know it’s wrong because if it were that simple I wouldn’t have had to resort to the AI bullshit generator.

I’m sorry, but all the use cases you listed show that you’re just lazy. Stop it. It’s embarrassing.

I’m lazy as fuck. I want to solve problems in the easiest way humanly possible. With the least amount of effort output.

What about you? Do you take the hard way?

Do you not cross reference multiple archived news articles and seek out past attendees to remind yourself of what Britney Spears wore at her last concert? smh

I’ll be real with you, I typed lazy but wanted to type idiot. Read your fucking emails Jesus Christ. You still have to check all the shit generative AI writes because it lies constantly. By its very nature, it does not understand what it’s generating.

Hard to tell if you’re trolling or trying to add value to the conversation and just missing it.

A hammer doesn’t know what it is building but it is still useful.

This is the nature of tools: for some they improve output, for some they don’t.

Everyone’s a god damn tool philosopher.

Personally, I’m fine with banning cigarettes regardless of how responsibly my dead grandpa may have used them.

Obviously, don’t rely on them to read important emails for you. But so many things don’t need additional checking. We’ve all done at least a decade of schooling. We all know basic math, science, and history. When we forget things, all it takes is a small reminder to get it back. Our brains are capable of recognizing whether we’ve seen something before or not. We’re also capable of reasoning to determine whether something we read is consistent with everything else we know.

So many other things are also so unimportant that it doesn’t matter at all if you’re wrong. For example, some actor looks familiar, it lies to you about what film they were in, and you believe it. Is your life any worse off for it?

it lies to you about what film they were in, and you believe it. Is your life any worse off for it?

I think a better question is: why, then, am I asking it questions?

If I had a friend I knew was a notorious liar, I would—big chess move—simply stop asking him who actors are. Unless it was really funny.

If it’s a liar that lies every time or most of the time, then yeah, don’t bother.

why […] am I asking it questions?

I can’t actually think of any specific scenario where something is unimportant enough to not matter but important enough that you’d ask. What I was originally thinking of were actually scenarios where I planned to verify the information at a later time, but I mistook that in my head as not verifying it.

Yeah, fair enough.

The only time it’s happened to me is when gemini violates my eyes with its presence.

Any usage that isn’t massively more efficient than the non-LLM way is unethical due to resource consumption. I.e. if a regular search engine would do the trick, using an LLM just because you can is unethical.

I asked ChatGPT to review my resume and make changes tailored for the job description I was applying to (which I also gave it). Also told it that this was an internal position and not really an upgrade, but a sidestep that (I felt at the time) was more aligned with my long-term career goals.

I was really happy with the improvements it suggested.

Didn’t get the job, but as I understand it, the hiring manager wanted to bring in a friend of his from the moment he posted it.

In retrospect, I’m kinda glad that’s how it panned out. The new guy and I are operationally equal, and he’s incredibly competent. We complement each other well, and get along great.

And he’s friends with the big boss and thus has his ear.

The main reason I even applied to the job was because I wouldn’t want to work with anyone but myself in that job. And he’s close enough.

I did get an interview for it, which ultimately just became a 1:1 with the boss and it gave us a chance to talk openly about where I see deficiencies that need fixing. All in all it went great. Six or so months later and I’m feeling a renaissance in the air at work. Like the things I talked about with him are now front-and-center and getting the attention they needed.

This company moves quite slowly, so six months (basically, new fiscal) seems incredibly quick.

It can be helpful for quickly summarizing a vast body of knowledge or a highly complex topic, to get a general overview and see which strings to pull further, as long as you don’t take everything at face value and understand that you still need to pull those strings yourself in order to acquire an understanding.

Like, if I suddenly wanted to learn computer programming, I wouldn’t know where to start. But querying an LLM can give me a general idea, define a few key terms and explain the difference between related concepts, without me having to browse through a hundred different tech blogs to answer all my questions in terms I can understand.

But I wouldn’t suddenly think I’m a computer programmer after doing that. I would have a better idea of where to start learning. I would be able to decide whether to focus first on object-oriented programming or functional programming, static or dynamic typing, declarative or imperative syntax, etc., instead of getting overwhelmed from the start just trying to learn the differences between those concepts.

It can also suggest resources for further learning, books or websites written by humans, links to open-source software that does what I’m trying to do, etc.

I wouldn’t expect it to write code for me, but it can be an efficient aid to self-learning and show me what programs and libraries to use for my intended purpose.

Or for astrophysics, for example. I wouldn’t expect it to give me an accurate breakdown of the engineering specs required to build a pair of O’Neill cylinders at a Lagrange point, but it can suggest software for rendering prototypes or for simulating the forces that need to be accounted for.

That wouldn’t make me an astrophysicist, but it’s kind of cool that you don’t need to be one to learn about this stuff and tinker around in a field that’s so vast and technical as to be otherwise prohibitive for non-experts.

It also depends on the LLM of course. I think Mistral and Lumo are generally pretty okay at doing what I described above. Their algorithms aren’t corrupted by american venture capital, at least, so they have more incentive to give you an accurate response rather than being sycophantic and hugboxing.

and then everyone clapped

ChatGPT alone has nearly a billion daily active users. Even accounting for corporate types who are pressured to use it, saying that nobody likes it or wants it is delusional.

The closest figure I could find said that ChatGPT sees around 800 million users per week, not per day. The monthly figure at its height in January 2026 was 5.72 billion visits, which works out to about 185 million (rounding up) per day. The statistics often switch between visits and users. I imagine users means unique users and visits means the number of times anyone has accessed the website. Regardless, all of this would mean a majority does not use ChatGPT.
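For anyone who wants to check the division, here is a quick sketch in Python. It only uses the two figures quoted in the comment above, which are themselves unverified, and the variable names are just illustrative.

```python
# Figures as quoted in the comment; not independently verified.
MONTHLY_VISITS = 5.72e9   # visits in January 2026, as quoted
DAYS_IN_JANUARY = 31
WEEKLY_USERS = 800e6      # weekly users, as quoted

# Average visits per day over the month.
visits_per_day = MONTHLY_VISITS / DAYS_IN_JANUARY

# Rough visits per week divided by weekly users. This assumes "visits"
# and "users" come from comparable sources, which may not be the case.
visits_per_weekly_user = visits_per_day * 7 / WEEKLY_USERS

print(f"{visits_per_day / 1e6:.1f} million visits per day")
print(f"{visits_per_weekly_user:.2f} visits per weekly user per week")
```

Note that this is visits per day, not unique users per day, so it cannot settle the daily active user question on its own.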

And what’s going to happen when the VC funding dries up and they have to charge what it actually costs to run?

Yeah, the point where companies don’t want to run those services at a loss any more is going to be interesting.

First step will be that they’ll slap ads into it. Afaik stuff like that is already planned.

Regardless, it also means hundreds of millions of people use it and other AI tools on a daily basis. They’re not all being forced to at gunpoint. Trying to pretend that the opinions of one’s own social circle are “everyone” and that people who disagree do not exist is not a persuasive argument for anything.

I don’t think it’s “regardless” at all.

My point was that saying that “everyone hates this” and “nobody wants this” (what the shirt in the post says) is flatly untrue. Whether ChatGPT has a billion daily active users or 500 million weekly active users or whatever is just trivia that doesn’t really change the gist of what I’m saying. Lots and lots and lots of people really like AI tools and use them every single day. Ignoring that fact and pretending like everyone agrees with you is dumb.

Sam, please get off of the fediverse, we don’t want AI here.

Ah yes, the other can’t-miss trick, “everyone who isn’t 100% on board with or mildly questions the hate train is a shill.”

Asking for less delusional anti-AI arguments isn’t AI boosting.

I just want to give props to you. I totally get your point but even through the negativity you stayed polite and tried to get your point across. Thank you for making the fediverse a better place.

This is the fediverse, you can’t really expect people here to check their hate.

Yes how dare you challenge our opinions!

Most people would take the statement as hyperbole. Also, the claim wasn’t against all AI use. It’s the shoving it everywhere that they say people hate. Even among those I know who use and like AI, they say it’s being shoved into things pretty arbitrarily and needlessly. Having it available to use is one thing. Ramming it down our throats is another.

True. Many of my colleagues are highly addicted clankers and I don’t think any of them like Copilot in Notepad, for example.

They’re not all being forced to at gunpoint.

No, but a lot of them are being ordered to do so by their employer.

If it’s something like Google or Windows that’s suddenly started generating AI “answers” or whatever when you just use your computer as normal, does that get counted in those statistics?

I bet it does, and I bet it accounts for a huge percentage of it.

Those probably won’t be counted if the number is ChatGPT users rather than LLM or genAI users. They’re a whole separate bunch of obnoxiousness instead!

[…] has nearly a billion daily active users.

So has Facebook, or opioids.

Your point is invalid.

Are you trying to claim that nobody likes Facebook? That nobody likes opioids? You can say that they’re harmful, but that’s not the argument OP was making. His point still stands.

You mean the average person defending the stance “Everybody hates this thing” isn’t logical, or rational? SurprisedPikachu.jpg

Where did I say that was a good thing?

Using it specifically is, at least for me, something different than having it included in everything else. It’s everywhere, no matter if it actually offers real benefits to the user and that’s the issue.

The most ridiculous shit I have seen was when the latest Gripen version was marketed as being AI powered.

For about 3 weeks I wasn’t able to share documents with coworkers without sharing them with Copilot first…

Yep. Fediverse is in a bubble. People in general have no feelings about it. They don’t love it or hate it, they just use it. They have joked about how it gets stuff wrong until like a year ago and that’s an old joke now.

A lot of people here have a passionate hatred of it, and they project that if someone doesn’t hate it as much, they must love it. But the large majority have no feelings towards it. It’s just a tool, useful for some things, not as useful for others, that’s it.

You are a prime example for a simple truth: circlejerking (“opinion bubbling”) doesn’t depend on platform.

There are a shitload of users. Yet, “everybody”…

Perhaps in their social circle, AI is the new porn. Who ever would watch that, after all?

But, more likely, everyone clapped and that was it…

Using it by choice when you specifically ask to is one thing.

When every action you take on a system is visibly being fed to the bots by default it gets intrusive and creepy.

ChatGPT alone has nearly a billion daily active users.

According to whom?

The people who run it? Who have every financial incentive in the world to inflate their numbers?

Even they aren’t that brazen.

It’s a “trust me bro” number that the commenter can later dismiss with “well it wasn’t precise, but you get my point, and if you don’t it’s you who’s boneheaded, not me”

The OP is about AI getting forced into things, which rightfully many people are pissed off about. But you’re right, ChatGPT and many other LLM tools are very popular.

The fact is though that the average person is starting to replace their search engine with ChatGPT, Gemini, Grok or whatever other LLM, and I have seen more and more small associations using generative AI to make their posters instead of working with artists or doing it themselves.

Is this because LLMs are getting better, or because search engines are getting worse?

Because they are definitely getting worse. I get redirected to a brand new slopsite daily.

Search engines peaked in the early 2010s and have been deteriorating ever since, becoming virtually unusable since ~2020.

Seriously, Google has become unusable without adding “site:reddit.com” to almost every search. I would like to see something like Perplexity be compared to a proper search engine - if it existed.

everything peaked in the 2010s ;)

I agree with the concern about Reddit in responses. Many times they include the shitposts.

It’s because people think AI is like those in the movies (cause it’s been advertised so, too), omniscient and infallible. A short while ago I overheard an “imagine, even the AI didn’t know it!” which normally would be “Your search didn’t return any results”.

AI slop has just accelerated the downfall of search engines. Attention based economy, advertising and SEO are the reason you can’t find anything useful anymore. The Internet itself is broken and even if there was a good search engine it would struggle to not suffocate in the seo crap out there.

At this point, I am willing to pay a subscription for a search engine if it’s ad-free and shows me what I’m looking for.

Kagi, searxng, startpage, ddg if lazy…

Kagi is great but paid, and some people have feelings about them using Yandex.

There are so many solutions. People are lazy and keep using trash Google that doesn’t even work. People ask me how in the world I find things. Because I am literate and I know how to use a computer. It’s not hard, guys.

Kagi sounds like what I’m looking for. Will consider it. Thanks for the information!

I never said LLMs or generative AI are good. I was talking about the post just being wishful thinking.

A good blogpost on this: The Enclosure feedback loop

When everybody uses AI to search, it becomes a closed system that holds all info. Doesn’t need to be productive, but it gatekeeps the knowledge that was free on the internet. It’s a self-reinforcing loop.

I work in infrastructure and what’s concerning is that younger guys are skipping learning to script to automate processes and instead just getting slop from LLMs that they have no idea what it’s doing.

Some have also relegated learning problem solving to it as well so when things go wrong, they’re clueless without it.

“Enshittified”; a new descriptor for my vocabulary. 😀

Google started making their search engine worse and always pushing things that they thought would make them money. It’s not surprising people are trying something else.

I think the research can be pretty cool. Every implementation has been kinda horrible.

The research/tinkerer community overwhelmingly agrees. They were making fun of Tech Bros before chatbots blew up.

I have made the conscious decision to try not to refer to it as AI, but as predictive LLMs or generative mimic models, to better reflect what they are. If we all manage to change our vernacular, perhaps we can make them slightly less attractive to use for everything. Some might even feel less inclined to brag about using them for all their work.

Other options might be unethical guessing machines, deceptive echo models, or the classic from Wh40k Abominable Intelligence.

I mostly agree. Machine Learning is AI, and LLMs are trained with a specific form of Machine Learning. It would be more accurate to say LLMs are created with AI, but themselves are just a static predictive model.

And people also need to realize that “AI” doesn’t mean sentient or conscious. It’s just a really complex computer algorithm. Even AGI won’t be sentient, it would only mimic sentiency.

And LLMs will never evolve into AGI, any more than Broca’s and Wernicke’s areas can be adapted to replace the prefrontal cortex, the cingulate gyrus, or the vagus nerve.

Tangent on the nature of consciousness:

The nature of consciousness is philosophically contentious, but science doesn’t really have any answers there either. The “Best Guess™” is that consciousness is an emergent property of neural activity, but unfortunately that leads to the delusion that “If we can just program enough bits into an algorithm, it will become conscious.” And venture capitalists are milking that assumption for all it’s worth.

The human brain isn’t merely electrical though, it’s electrochemical. It’s pretty foolish to write off the entire chemical aspect of the brain’s physiology and just assume that the electrical impulses are all that matter. The fact is, we don’t know what’s responsible for the property of consciousness. We don’t even know why humans are conscious rather than just being mindless automatons encased in meat.

Yes, the brain can detect light and color, temperature and pressure, pleasure and pain, proprioception, sound vibrations, aromatic volatile gasses and particles, chemical signals perceived as tastes, other chemical signals perceived as emotions, etc… But why do we perceive what the brain detects? Why is there even an us to perceive it? That’s unanswerable.

Furthermore, where are “we” even located? In the brainstem? The frontal cortex? The corpus callosum? The amygdala or hippocampus? The pineal or pituitary gland? The occipital, parietal, or temporal lobe? Are “we” distributed throughout the whole system? If so, does that include the spinal cord and peripheral nervous system?

Where is the center of the “self” responsible for the perception of “selfhood” and “self-awareness”?

Until science can answer that, there is no path to artificial sentiency, and the closest approximation we have to an explanation for our own sentiency is simply Cogito Ergo Sum: I only know that I am sentient, because if I wasn’t then I wouldn’t be able to question my own sentiency and be aware of the fact that I am questioning it.

Why digital circuits will never be conscious:

The human brain has about 86 billion neurons, roughly 14 to 16 billion of them in the cerebral cortex. The average commercial API-based LLM already has about 150 billion parameters, and at FP32 precision that’s 4 bytes per parameter. If all it takes is a complex enough system of digits, it would have already worked.

It’s just as likely that consciousness doesn’t emerge from electrochemical interactions, but is an inherent property of them. If every electron was conscious of its whirring around, we wouldn’t know the difference. Perhaps when enough of them are concerted together in a common effort, their simple form of consciousness “pools together” to form a more complex, unitary consciousness just like drops of water in a bucket form one pool of water. But that’s just pure speculation. And so is emergent consciousness theory. The difference is that consciousness as a property rather than an effect would explain why it seems to emerge from complex enough systems.

It’s just a really complex computer algorithm

Not particularly complex. An LLM is:

$P_\theta(x) = \prod_t \text{softmax}(f_\theta(x_{<t}))_{x_t}$

where $f$ is a deep Transformer trained by maximum likelihood.
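To make that formula concrete, here is a minimal sketch in plain Python. The vocabulary and `toy_logits` are made up for illustration: `toy_logits` is only a stand-in for $f_\theta$ and is nowhere near a Transformer. The point is just the shape of the computation: for each position, turn the logits for the prefix into a distribution with softmax, pick out the probability of the token that actually comes next, and multiply.

```python
import math

VOCAB = ["a", "b", "<eos>"]  # hypothetical toy vocabulary

def softmax(logits):
    """Turn a list of logits into probabilities that sum to 1."""
    m = max(logits)  # subtract the max for numerical stability
    exps = [math.exp(v - m) for v in logits]
    total = sum(exps)
    return [e / total for e in exps]

def toy_logits(prefix):
    """Stand-in for f_theta: maps a prefix to one logit per vocab token.
    A real LLM runs a deep Transformer here; this just counts tokens."""
    return [1.0 + prefix.count(tok) for tok in VOCAB]

def sequence_probability(tokens):
    """Product over t of softmax(f(x_<t)) evaluated at the actual token x_t."""
    prob = 1.0
    for t, tok in enumerate(tokens):
        next_token_probs = softmax(toy_logits(tokens[:t]))
        prob *= next_token_probs[VOCAB.index(tok)]
    return prob

print(sequence_probability(["a", "a", "<eos>"]))
```

Swapping `toy_logits` for a trained network is where all the parameters, training data and compute go; the outer loop stays this small.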

That “deep Transformer trained by maximum likelihood” is the complex part.

Billions of parameters in a tensor field distributed over a dozen or more layers, each layer divided by hidden sizes, and multiple attention heads per hidden size. Every parameter’s weight is algorithmically adjusted during training. For every query a matrix multiplication is done on multiple vectors to approximate the relevancy between each token. Possibly tens of thousands of tokens being stored in cached memory at a time, each one being analyzed relative to each other.
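The "relevancy between each token" step is usually scaled dot-product attention. Below is a rough single-head sketch in plain Python, assuming the query, key and value vectors have already been computed. It leaves out the learned projections, multiple heads, causal masking and caching that real models use, so treat it as an illustration of the idea rather than how any particular model implements it.

```python
import math

def softmax(scores):
    """Normalize a list of scores into weights that sum to 1."""
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def attention(queries, keys, values):
    """Single-head scaled dot-product attention over lists of vectors."""
    d = len(keys[0])  # key dimension, used for scaling
    outputs = []
    for q in queries:
        # Relevance of every key to this query: dot product / sqrt(d).
        scores = [sum(qi * ki for qi, ki in zip(q, k)) / math.sqrt(d) for k in keys]
        weights = softmax(scores)
        # Output is the relevance-weighted average of the value vectors.
        dim_v = len(values[0])
        outputs.append([sum(w * v[j] for w, v in zip(weights, values)) for j in range(dim_v)])
    return outputs

# Two tokens, 2-dimensional vectors (made-up numbers).
q = [[1.0, 0.0], [0.0, 1.0]]
k = [[1.0, 0.0], [0.0, 1.0]]
v = [[1.0, 2.0], [3.0, 4.0]]
print(attention(q, k, v))
```

Every query is compared against every key, which is why the cost grows quickly with the number of tokens held in context.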

And for standard architecture, each parameter requires four bytes of memory. Even 8-bit quantization requires one byte per parameter. That’s 12-24 GB RAM for a model considered small, in the most efficient format that’s still even remotely practical.
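The memory figures are just parameters times bytes per parameter. A back-of-the-envelope sketch in Python, where the parameter counts are my reading of the numbers in this thread (12 to 24 billion for the "small" model, 150 billion for the large one) rather than specs of any real model:

```python
# Bytes needed to store one parameter in each numeric format.
BYTES_PER_PARAM = {"fp32": 4, "fp16": 2, "int8": 1}

def weight_memory_gb(num_params, fmt):
    """Memory for the weights alone, in GB (1 GB = 1e9 bytes).
    Ignores activations, the token cache, and runtime overhead."""
    return num_params * BYTES_PER_PARAM[fmt] / 1e9

# Assumed parameter counts, for illustration only.
for params in (12e9, 24e9, 150e9):
    for fmt in ("fp32", "int8"):
        print(f"{params / 1e9:.0f}B params @ {fmt}: {weight_memory_gb(params, fmt):.0f} GB")
```

So 12 to 24 GB at 8-bit corresponds to roughly 12 to 24 billion parameters, and the same model at FP32 would need four times that before counting anything else.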

Deep transformers are not simple systems, if they were then it wouldn’t take such an enormous amount of resources to fully train them.

You’re really breaking the shitting on AI vibe when you make it sound like the height of human capacity and ingenuity. Can I just call it slop and go back to eating glue?

You can still shit on AI, just because it’s computationally complex doesn’t make it the greatest thing ever. It still has a lot of problems. In fact, one of the main problems is its consumption of resources (water, electricity, RAM, etc.) due to its computational complexity.

I’m not defending AI companies, I just think characterizing LLMs as “simple” is misleading.

Our whole economy is geared to consume resources; we have inflation targeting to prevent aggregate demand and prices from ever falling. If you want to lower consumption you need hard currency. The cheap cash that the AI companies are riding on now is most likely still Covid stimulus and QE.

And speculation. Venture capitalists think they can create money by “investing” (betting) money that they predict they’ll have in the future. It’s how this circular Ponzi scheme between Nvidia and OpenAI is holding itself up for now. Those huge numbers that they count in their net worth don’t really exist. It’s money that’s been pledged by a different company based on money they pledged to that company in the first place. It’s speculation all the way down.

They’re hoping for a pay-off, but it’s a bubble of sunken costs kicking the can down the road for as long as they can before it bursts.

The technical implementation, computational effort and sheer volume of training data is astounding.

But that doesn’t change the fact that the algorithm is pretty simple. Deepseek is about 1,400 lines of code across 5 .py files

“Asking one’s chat bot” sounds so much less impressive than “leveraging AI”. Using the right language throws some cold water on the corporate narrative.

The Men of Iron are so freaking cool! They’re still around in modern 40k, hiding and biding their time.

Maybe one day we’ll have a whole new army of AIs in 40k!

I’m reading AI Engineering by Chip Huyen and it’s an excellent read. As a technologist, I find the topic fascinating and would enjoy building AI agents. While not a silver bullet, generative models definitely represent technological progress and can boost productivity when used correctly. It’s just that as with everything else, the billionaires want to milk it for everything it’s worth and more to the point of crashing the economy and destroying supply chains for their own selfish interests. We just can’t have nice things.

And then everyone clapped… Blah, blah, blah.

And, I do not like AI.

I hate these fake “everyone clapped” stories just as much as I hate AI

And everyone clapped

Obama was there, he awarded the medal of honor, my parents were proud of me, the AI chump was instantly killed

this post is real✅ and has been fact checked by true american patriots✅