Yeah, it would seem the embrace from those “who should maybe know better” rests on it being the right compromise for making progress in this field.

Bluesky is not just another centralised platform. It’s (mostly) open source, based on an open protocol, and built on a hybrid-decentralised architecture. The security against the “billionaire” problem, AFAICT, is that we can rebuild it with our own data should it go to shit.

This thread from Andre Staltz is indicative, I think: https://bsky.app/profile/staltz.com/post/3lawesmv6ik2d

He worked on Scuttlebutt/Manyverse for a long while before moving on a year or so ago. Paul Frazee, a core dev with bsky, had also previously worked on decentralisation. With both of them, I think there’s a hunger to just make it work for people and not fail on idealistic grounds.

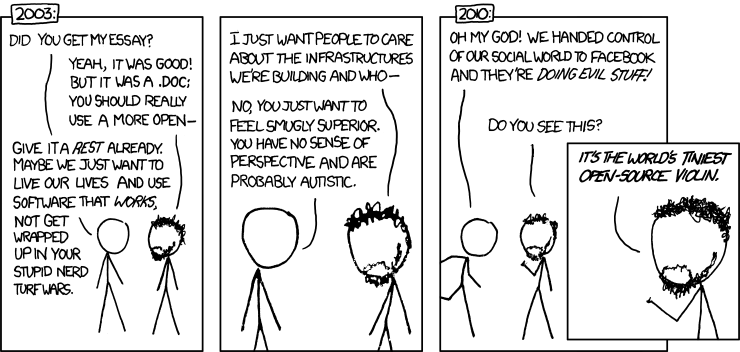

The interesting dynamic is that they seem to be making things that could lay the foundations for a lot of independent decentralised stuff, but people and devs need to actually pick that up and make it happen, and many users just want something that works.

So, somewhat like lemmy.world and mastodon.social, they get stuck holding a centralised service whose success holds hostage the decentralised system/protocol they actually care about.

For me, the thing I’ve noticed, and that bothers me, is that much of the focus and excitement from the independent devs working in the space isn’t on the purely decentralised and fail-safe-rebuilding aspects of the system. Instead, they’re quite happy to build on top of a centralised service.

Which is fine, but it ignores what to me is the greatest promise of their system: combining centralised and decentralised components into a single network. E.g., AFAICT, running ActivityPub or similar within ATProto is plausible. But the independent devs don’t seem to be on that wavelength.