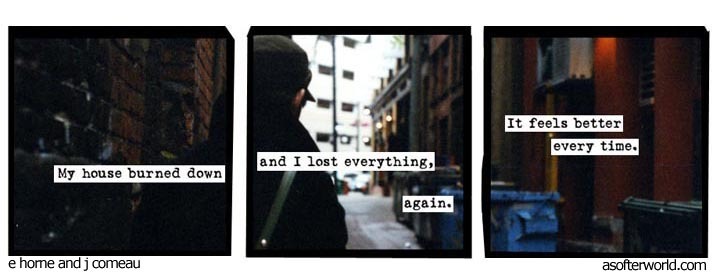

That’s fair, but comics like the above (which are endemic to this sub) aren’t about the extremes; they’re about standard human behaviours.

It’d be like someone seeing me double check the door lock at my house and saying “You must have OCD.”

I also find it mildly concerning that someone may see these memes / comics and diagnose themselves with a mental illness they do not have, since self-diagnosis (and wanting to belong to a group) is a massive issue in the internet age.

My insane conspiracy theorist mother-in-law would love this.

Sadly.